Is This More Impressive Than V3?

Author: Filomena Doi. Posted: 25-02-01 17:09. Views: 3. Comments: 0.

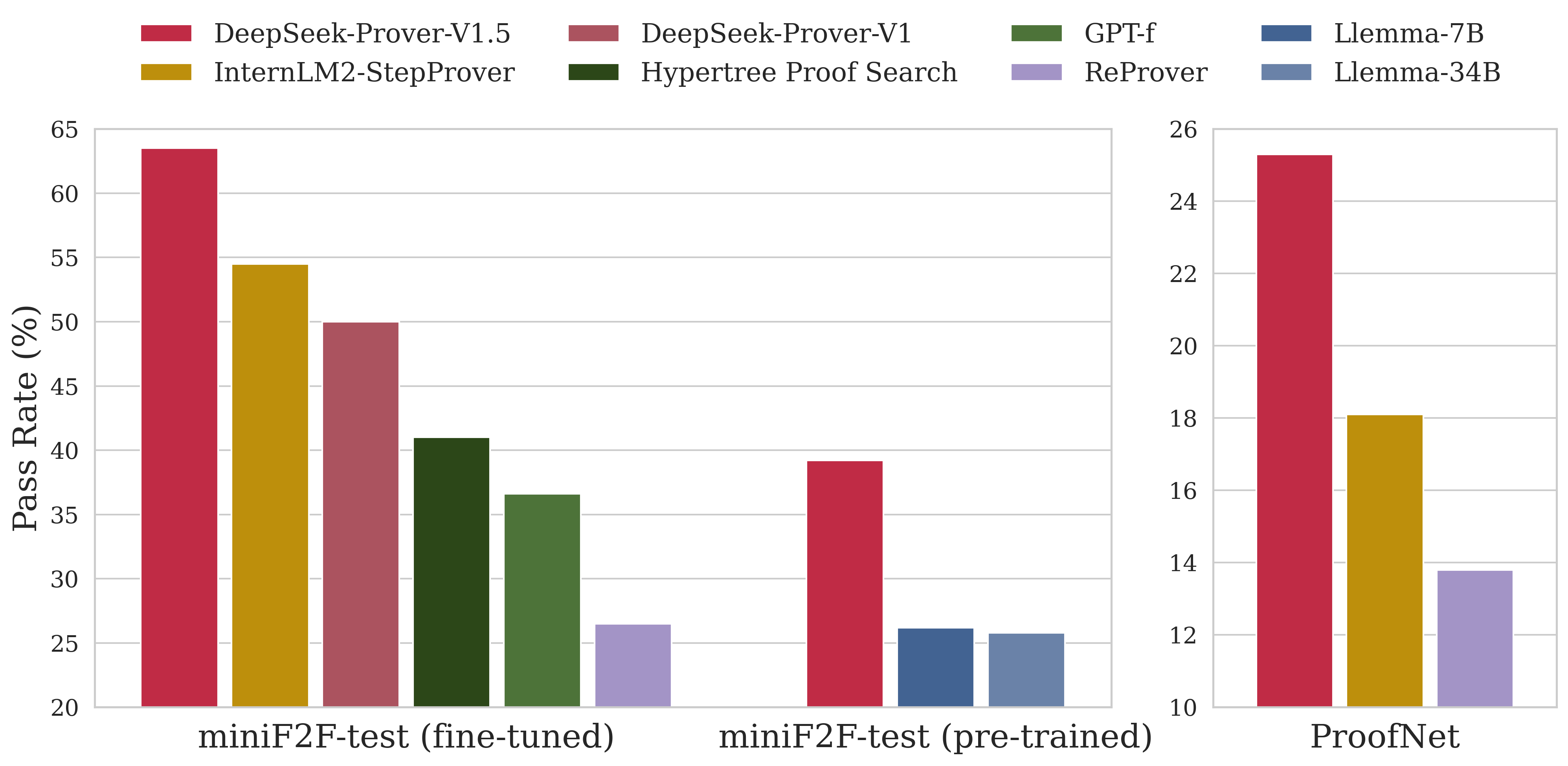

DeepSeek also hires people without any computer science background to help its tech better understand a wide range of subjects, per The New York Times. We demonstrate that the reasoning patterns of larger models can be distilled into smaller models, resulting in better performance compared to the reasoning patterns discovered through RL on small models. Our pipeline elegantly incorporates the verification and reflection patterns of R1 into DeepSeek-V3 and notably improves its reasoning performance. Huawei Ascend NPU: Supports running DeepSeek-V3 on Huawei Ascend devices. It uses Pydantic for Python and Zod for JS/TS for data validation and supports various model providers beyond OpenAI. Instantiating the Nebius model with LangChain is a minor change, similar to the OpenAI client. Read the paper: DeepSeek-V2: A Strong, Economical, and Efficient Mixture-of-Experts Language Model (arXiv). Outrageously large neural networks: The sparsely-gated mixture-of-experts layer. LiveCodeBench: Holistic and contamination free evaluation of large language models for code. Chinese SimpleQA: A Chinese factuality evaluation for large language models.
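The data-validation step mentioned above can be illustrated with a minimal sketch. It uses only the Python standard library as a stand-in for Pydantic, so it does not show Pydantic's actual API; the `Answer` schema, its fields, and the `parse_answer` helper are hypothetical names chosen for illustration.

```python
import json
from dataclasses import dataclass


@dataclass
class Answer:
    """Hypothetical schema for a structured model reply."""
    title: str
    score: float


def parse_answer(raw: str) -> Answer:
    """Parse a JSON string and check that the expected fields have the expected types."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    title = data.get("title")
    score = data.get("score")
    if not isinstance(title, str):
        raise ValueError("'title' must be a string")
    # bool is a subclass of int, so it is excluded explicitly
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        raise ValueError("'score' must be a number")
    return Answer(title=title, score=float(score))
```

A validation library would normally generate these checks from the schema declaration; writing them by hand here only makes the idea visible without extra dependencies.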

YaRN: Efficient context window extension of large language models. This is a general-use model that excels at reasoning and multi-turn conversations, with an improved focus on longer context lengths. 2) CoT (Chain of Thought) is the reasoning content deepseek-reasoner gives before outputting the final answer. Features like Function Calling, FIM completion, and JSON output remain unchanged. Returning a tuple: The function returns a tuple of the two vectors as its result. Why this matters - speeding up the AI production function with a big model: AutoRT shows how we can take the dividends of a fast-moving part of AI (generative models) and use these to speed up development of a comparatively slower-moving part of AI (smart robots). You can also use the model to automatically task the robots to gather data, which is most of what Google did here. For more information on how to use this, check out the repository. For more evaluation details, please check our paper. Fact, fetch, and reason: A unified evaluation of retrieval-augmented generation.
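To make the split between chain-of-thought and final answer concrete, here is a small offline sketch. It assumes the assistant message carries the reasoning in a `reasoning_content` field next to the usual `content` field; that field name, the sample payload, and the helper names `split_reply` and `next_turn` are assumptions for illustration, and no network call is made.

```python
from typing import Any


def split_reply(message: dict[str, Any]) -> tuple[str, str]:
    """Return (reasoning, answer) from one assistant message dict."""
    reasoning = message.get("reasoning_content") or ""
    answer = message.get("content") or ""
    return reasoning, answer


def next_turn(
    history: list[dict[str, Any]],
    message: dict[str, Any],
    user_text: str,
) -> list[dict[str, Any]]:
    """Extend the history with the final answer only, then the new user turn."""
    _, answer = split_reply(message)
    return history + [
        {"role": "assistant", "content": answer},  # reasoning is deliberately left out
        {"role": "user", "content": user_text},
    ]


# Hypothetical payload shaped like a chat-completion message
sample = {
    "role": "assistant",
    "reasoning_content": "9.11 has a smaller tenths digit than 9.8.",
    "content": "9.8 is larger.",
}
history = [{"role": "user", "content": "Which is larger, 9.11 or 9.8?"}]
messages = next_turn(history, sample, "Explain in one sentence.")
```

Keeping the reasoning out of the follow-up messages is a design choice in this sketch: only the final answer is treated as part of the conversation.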

Chiang, E. Frick, L. Dunlap, T. Wu, B. Zhu, J. E. Gonzalez, and I. Stoica. Jain et al. (2024) N. Jain, K. Han, A. Gu, W. Li, F. Yan, T. Zhang, S. Wang, A. Solar-Lezama, K. Sen, and I. Stoica. Lin (2024) B. Y. Lin. MAA (2024) MAA. American Invitational Mathematics Examination - AIME. Inside the sandbox is a Jupyter server you can control from their SDK. But now that DeepSeek-R1 is out and available, including as an open weight release, all these forms of control have become moot. There have been many releases this year. One thing to keep in mind before dropping ChatGPT for DeepSeek is that you won't be able to upload images for analysis, generate images, or use some of the breakout tools like Canvas that set ChatGPT apart. A common use case is to complete the code for the user after they provide a descriptive comment. NOT paid to use. RewardBench: Evaluating reward models for language modeling. This method uses human preferences as a reward signal to fine-tune our models. While human oversight and instruction will remain crucial, the ability to generate code, automate workflows, and streamline processes promises to accelerate product development and innovation.
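How human preferences can act as a reward signal may be easier to see in code. The text does not say which objective is used, so the sketch below assumes the common pairwise (Bradley-Terry style) formulation, where a reward model is trained so that the response a human preferred scores higher than the rejected one. The function name `preference_loss` and the scalar inputs are hypothetical.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise loss -log(sigmoid(r_chosen - r_rejected)), computed stably."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) equals log(1 + exp(-m)); branch to avoid overflow in exp
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# The loss shrinks as the preferred response is scored further above the rejected one
low = preference_loss(3.0, 0.0)
high = preference_loss(0.0, 3.0)
```

In a real training loop the two rewards would come from a learned model and the loss would be averaged over many labeled pairs; plain floats are used here only to keep the example dependency-free.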
