Open Mike on Deepseek

Posted by Sherlene on 25-02-03 11:53

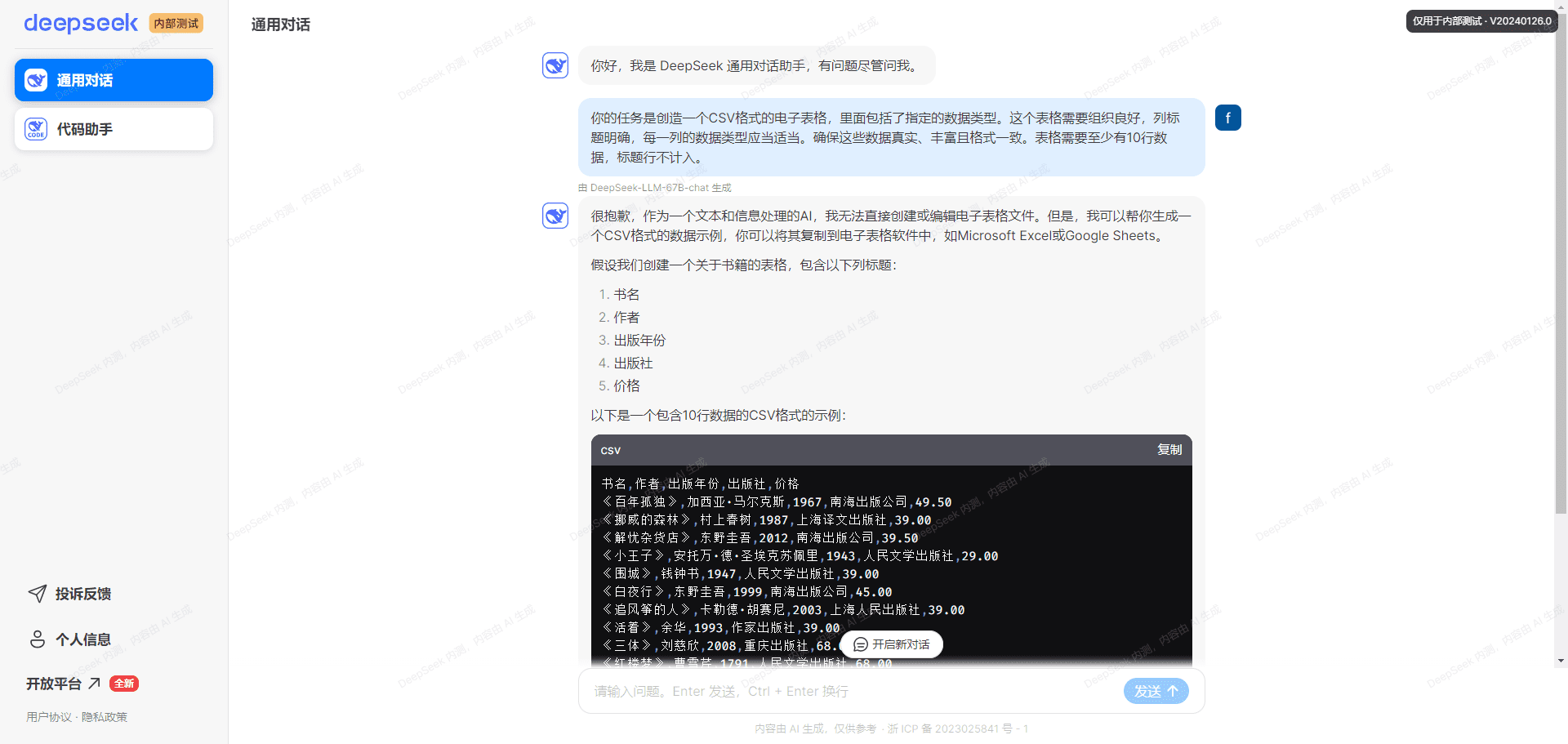

The callbacks have been set, and the events are configured to be sent to my backend. Points 2 and 3 are basically about financial resources that I don't have available at the moment. These are the three main problems that I encountered. I tried to understand how it works first before moving on to the main course. The first problem that I encountered during this project was the concept of chat messages. Within each role, authors are listed alphabetically by first name. Those extremely large models are going to be very proprietary, backed by a body of hard-won expertise in managing distributed GPU clusters. However, it isn't hard to see the intent behind DeepSeek's carefully curated refusals, and as exciting as the open-source nature of DeepSeek is, one should be cognizant that this bias will probably be propagated into any future models derived from it.

Because it would change by nature of the work that they’re doing. The bot itself is used when the mentioned developer is away for work and cannot reply to his girlfriend. I worked with the FLIP Callback API for payment gateways about two years ago. I didn't actually know how events worked, and it turned out that I needed to subscribe to events in order to send the relevant events triggered in the Slack app to my callback API. To be specific, during MMA (Matrix Multiply-Accumulate) execution on Tensor Cores, intermediate results are accumulated using a limited bit width. That jogged my memory a bit when I tried to integrate with Slack. Yes, all the steps above were a bit complicated and took me four days, including the extra procrastination. Yes, I'm broke and unemployed. 3. Is the WhatsApp API really paid? It's simply a matter of connecting Ollama with the WhatsApp API. I believe that ChatGPT is paid, so I tried Ollama for this little project of mine. I pull the DeepSeek Coder model and use the Ollama API service to create a prompt and get the generated response.
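For the last step, here is a minimal sketch of what calling the Ollama API could look like. It assumes Ollama is running locally on its default port (11434), that the model was pulled under the tag `deepseek-coder`, and that a single non-streaming reply is enough. The helper names (`build_payload`, `extract_reply`, `generate`) are made up for illustration and are not from the original project.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(prompt, model="deepseek-coder"):
    """Build the JSON body for a non-streaming /api/generate request."""
    return {"model": model, "prompt": prompt, "stream": False}


def extract_reply(raw_body):
    """Pull the generated text out of a non-streaming response body."""
    return json.loads(raw_body)["response"]


def generate(prompt, model="deepseek-coder", url=OLLAMA_URL, timeout=120):
    """Send the prompt to a running Ollama server and return its reply."""
    data = json.dumps(build_payload(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return extract_reply(response.read())
```

With the server running, something like `generate("Reply politely that I am busy at work.")` should return the model's text, which the bot could then forward to the messaging API.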

A100 processors," according to the Financial Times, and it's clearly putting them to good use for the benefit of open-source AI researchers. Even OpenAI’s closed-source approach can’t prevent others from catching up. I also think that the WhatsApp API is paid, even in developer mode. I think that the TikTok creator who made the bot may be selling the bot as a service. I also believe that the creator was skilled enough to create such a bot. Create a bot and assign it to the Meta Business App. Create a system user within the business app that is authorized for the bot. Create an API key for the system user. For the uninitiated, FLOP measures the amount of computational power (i.e., compute) required to train an AI system. Both of the baseline models purely use auxiliary losses to encourage load balance, and use the sigmoid gating function with top-K affinity normalization. The most impactful models are the language models: DeepSeek-R1 is a model similar to ChatGPT's o1, in that it applies self-prompting to give an appearance of reasoning. Reinforcement learning. DeepSeek used a large-scale reinforcement learning approach focused on reasoning tasks.
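The "sigmoid gating function with top-K affinity normalization" mentioned above can be illustrated with a small sketch. The text gives no formula, so this is one plausible reading rather than the models' actual routing code: each expert gets a sigmoid affinity from a raw score, the K highest affinities are kept, and those are rescaled to sum to 1. The function names and the input scores are hypothetical.

```python
import math


def sigmoid(x):
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-x))


def top_k_gates(scores, k):
    """Map the k highest-affinity expert indices to normalized gate values.

    Assumption: `scores` holds one raw token-to-expert score per expert.
    """
    affinities = [sigmoid(s) for s in scores]
    # Pick the k experts with the highest sigmoid affinity.
    chosen = sorted(
        range(len(affinities)), key=lambda i: affinities[i], reverse=True
    )[:k]
    # Normalize only over the selected experts so the gates sum to 1.
    total = sum(affinities[i] for i in chosen)
    return {i: affinities[i] / total for i in chosen}
```

For example, `top_k_gates([0.0, 2.0, -1.0, 1.0], 2)` keeps experts 1 and 3, with expert 1 receiving the larger gate.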

